The Coordination Layer: How AI Agents Work Inside Programmable Media

This is article 4 of the series "The Infrastructure for Programmable Media."

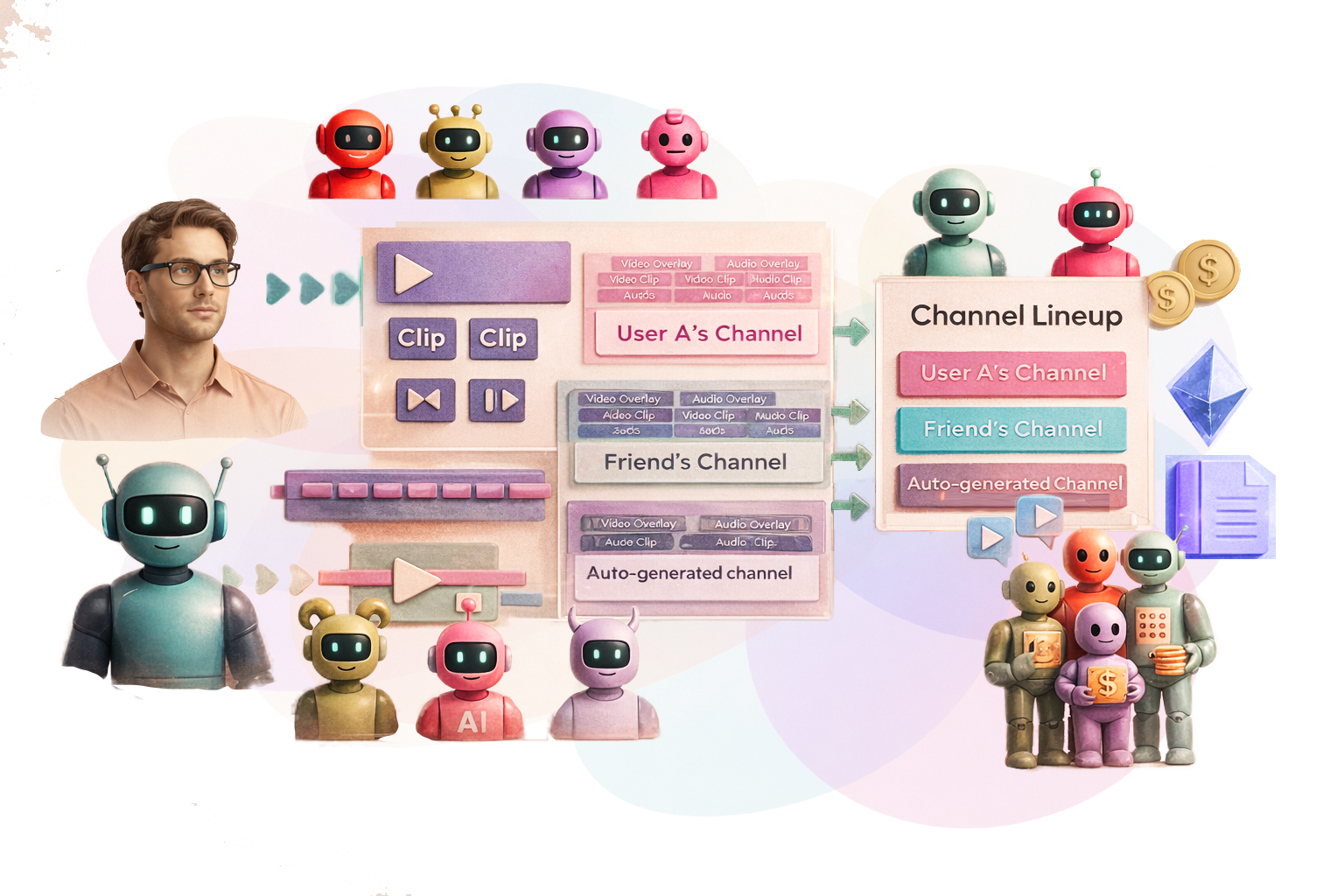

So far in this series we have covered why the old media distribution infrastructure breaks, what it looks like to build media in layers instead of flat files, and what opens up when premium content allows remixes and variations that fork and evolve while staying connected to their original source. Combining clips from several different sources while tracking where each one came from creates more opportunities for revenue, discoverability, and enjoyment, along with a stronger connection to both the original content and niche communities. But at some point, producing all these mixes and their variations goes from being an editorial labor of love to an overwhelming pain point. One practical answer is to use AI agents to support, and in places fill, the role of the content producer.

What an AI agent actually does here

The most common idea of AI's role in media is generative. People expect tools that write scripts, produce images, and make video clips from prompts. That is a possibility in the programmable media world, but it would be easier to describe the AI content producer's role if you put that idea to the side for now.

Think instead about what a human editor, curator, or licensor actually does. They search for material, edit it based on context and audience, shape it into something new, layer in metadata and supporting media elements, publish it across platforms, and track how it performs. Somewhere in that process they also set their payment terms and rights permissions, and once content is live they review the results to learn what works so they can do it better next time.

An AI content producer agent does all of that, but works across a much larger range and does it continuously. An agent can:

search internal libraries and external sources,

curate and edit material based on viewer preferences and engagement data,

assemble clips of media into layers and bigger mixes, such as Dynamic Multilayered Media Structures,

generate new elements when that is a better fit for the end user,

sequence and schedule content across channels,

execute payments and attribution records on behalf of every stakeholder involved, and

learn from outcomes to refine its judgment over time, based on observed performance across users, devices, and environments.

What makes this different from a general-purpose AI tool is the range of the system the agent can address. Most agentic frameworks operate at a single level of abstraction. This one works across hardware commands to physical devices, raw media elements, compiled sequences, full layered structures, and complete channel lineups, all simultaneously. The same agent can adjust an IoT-connected light in response to a tempo change in the audio layer and restructure an entire channel lineup based on real-time engagement data, sometimes in the same action.

Here’s an example of how a video podcaster or livestream creator can work with programmable media and AI agents. The agent can monitor engagement in real time, timestamp the highest-interaction moments, and queue them as micro-clip candidates. After the stream ends it can assemble variations from that material: a highlight reel, a tutorial sequence, and promotional vertical clips, each formatted for the devices the audience prefers to use, with non-cleared audio swapped out automatically. The creator can focus on making original content while the agent handles the rest.
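
The "timestamp the highest-interaction moments" step can be sketched in a few lines. This is only an illustration under stated assumptions: engagement arrives as per-second interaction counts, a clip candidate is a fixed-length window, and the names `EngagementSample` and `clip_candidates` are hypothetical rather than part of any real system described in this series.

```python
from dataclasses import dataclass


@dataclass
class EngagementSample:
    second: int        # offset from stream start, in seconds
    interactions: int  # chats, reactions, shares in that second (assumed metric)


def clip_candidates(samples, window=30, top_n=3):
    """Return (start, end, total_interactions) for the top_n busiest
    non-overlapping windows, ordered by start time."""
    if not samples:
        return []
    duration = max(s.second for s in samples) + 1
    per_second = [0] * duration
    for s in samples:
        per_second[s.second] += s.interactions

    # Score every fixed-length window with a sliding sum.
    running = sum(per_second[:window])
    scores = [(running, 0)]
    last_start = max(duration - window, 0)
    for start in range(1, last_start + 1):
        running += per_second[start + window - 1] - per_second[start - 1]
        scores.append((running, start))

    # Greedily keep the best windows that do not overlap one already chosen.
    # Ties go to the earliest window.
    chosen = []
    for total, start in sorted(scores, key=lambda x: (-x[0], x[1])):
        if all(abs(start - c_start) >= window for c_start, _, _ in chosen):
            chosen.append((start, min(start + window, duration), total))
        if len(chosen) == top_n:
            break
    return sorted(chosen)
```

A production agent would likely weight different interaction types and snap clip boundaries to scene or sentence breaks; the fixed window here just keeps the idea visible.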

The same logic applies when the destination is a legacy environment. Sometimes the best audiences are still on social feeds that expect a flat video file, or an older broadcast system, or a newsletter that just needs a thumbnail. In these scenarios the agent can do its multi-layered magic and collapse the structure into whatever format is required. The flattened export is recorded in the version history as a derivative, the full source stays intact, and if the destination ever upgrades, the complete layered structure is there waiting to be re-expanded.
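
As a rough sketch of that flattening step, assume a layered structure is just a list of named layers plus an append-only history. The class and field names below (`Layer`, `MediaStructure`, `flatten_for`, `derived_from`) are hypothetical and chosen for illustration only. The point is that the export is a new record pointing back at its source, while the source itself is never modified.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Layer:
    name: str       # e.g. "video", "audio", "captions"
    source_id: str  # identifier of the original clip this layer came from


@dataclass
class MediaStructure:
    structure_id: str
    layers: list
    history: list = field(default_factory=list)  # append-only version history

    def flatten_for(self, destination, keep):
        """Export a flat derivative holding only the layers the destination
        can render. The layered source is left untouched."""
        kept = [layer for layer in self.layers if layer.name in keep]
        export = {
            "derived_from": self.structure_id,
            "destination": destination,
            "sources": [layer.source_id for layer in kept],
        }
        # Record the export as a derivative so attribution survives flattening.
        self.history.append({"event": "flattened_export", **export})
        return export


mix = MediaStructure(
    "mix-001",
    [Layer("video", "clip-1"), Layer("audio", "clip-2"), Layer("captions", "clip-3")],
)
flat = mix.flatten_for("social_feed", keep={"video", "audio"})
```

After the call, `mix.layers` still holds all three layers, which is what makes a later re-expansion possible.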

How sponsorship fits in

Because these are clips and compilations that link back to their sources, there are more ways to integrate a sponsorship (an ad) into this system. In the basic process, the agent generates, edits, and distributes sponsored content within specific layers, channels, or lineups. It does this based on predictive analytics, stated preferences, inventory categories, algorithmic and AI decisioning, and real-time payment calculations. A brand layer gets inserted because the system determined it was relevant to the viewer, the channel, and the moment, and the economics of that placement are calculated and recorded automatically at the moment it plays. How the full sponsorship and economics layer works across the ecosystem is covered in a later article in this series.

Built with guardrails in mind

For AI agents to operate at scale with big money flowing through them, they need to follow the rules, and those rules travel with the media structure itself. You might be surprised to hear that AI agents are still in the uncontrolled “wild west” phase, with only 21% of companies having a mature governance model for agents (and that doesn’t include the many independent entrepreneurs and hobbyists who value moving fast over following a compliance system). So the best way to provide protection and trust is to make sure that these AI content producers have standards. For example, agents in this system cannot:

combine two elements if the licensing rights don't permit it,

serve content if regional licensing blocks it,

place a brand layer if the channel's settings prohibit sponsorship,

override creator rights settings,

pass protected user data to advertisers and external partners without consent,

make financial settlements or smart contracts without authorization, and

modify a media structure without recording the modification in the version history.

An agent that can do anything without controls is a big fat liability. Every action the agent takes should be recorded in the data profile of the media clips and mixes it touched, keeping the system auditable at every step (while protecting privacy). Governance baked into the architecture is what allows the agent to operate semi-autonomously without anyone losing sleep over what it's doing.
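
To make "rules that travel with the media" a little more concrete, here is a minimal sketch of three of the checks listed above, each of which writes to an audit log whether the action is allowed or refused. Everything in it is an assumption made for illustration: the field names (`allows_remix`, `blocked_regions`, `allows_sponsorship`) and the functions are hypothetical, and a real system would carry far richer rights data.

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    element_id: str
    allows_remix: bool = True                  # assumed licensing flag
    blocked_regions: frozenset = frozenset()   # assumed regional restrictions


@dataclass
class Channel:
    channel_id: str
    allows_sponsorship: bool = True


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, action, allowed, reason):
        # Every decision is logged, including refusals.
        self.entries.append({"action": action, "allowed": allowed, "reason": reason})


def can_combine(a, b, log):
    allowed = a.allows_remix and b.allows_remix
    reason = "ok" if allowed else "licensing does not permit combination"
    log.record(f"combine:{a.element_id}+{b.element_id}", allowed, reason)
    return allowed


def can_serve(element, region, log):
    allowed = region not in element.blocked_regions
    reason = "ok" if allowed else f"regional licensing blocks {region}"
    log.record(f"serve:{element.element_id}@{region}", allowed, reason)
    return allowed


def can_place_brand_layer(channel, log):
    allowed = channel.allows_sponsorship
    reason = "ok" if allowed else "channel settings prohibit sponsorship"
    log.record(f"brand_layer:{channel.channel_id}", allowed, reason)
    return allowed
```

The design choice worth noticing is that the agent never holds the rules itself. It asks the element or channel, and the answer is logged either way, so an auditor can later see what was attempted as well as what went through.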

What It Looks Like When AI Agents Help Media to Adapt, Learn, and Redistribute Itself

Media is shifting from fixed outputs to self-evolving systems. As that shift accelerates, the challenge moves from creation to coordination and interactivity. It’s too much for humans to handle individually, so human-in-the-loop collaborations and AI content producer agents fill that gap by assembling, adapting, and distributing media while preserving attribution, enforcing rights, and keeping humans in control of the terms.

Once attribution becomes precise and fractional payments are automatic, the type of content creators make could start to shift. Art has always evolved alongside the tools used to make it. Photography didn't kill painting; it encouraged painters to move toward abstract ideas. Sampling didn't kill music; it made some great bops. Programmable media is likely to do something similar. If an isolated vocal track, an unedited drone shot, or a green-screened outline of someone dancing each generate a fraction of a payment every time an AI agent anywhere in the world uses them in a mix, the incentive to create finished works changes. There may be more cumulative value in producing high-quality raw modular ingredients than in painting a final piece.

And when AI agents are coordinating media at scale, the question of “who gets paid for what?” becomes something the system needs to answer automatically. That is the economics layer, and it is where the next article picks up.